AI Made Manageable: Core Concepts and a Framework for Primary Care

By: Justin Wolting, Manager, Product Development and Innovation, Amplify Care

There’s no doubt that AI in healthcare is moving fast – faster than most clinicians and their care teams have time to keep up with. At this year’s HIMSS Eastern Chapter Conference, I gave a presentation on how clinicians can cut through the noise: understanding the types of AI they’re hearing about, evaluating them responsibly, and seeing how our upcoming Amplify Primary Care AI Framework will support safe, practical adoption.

If you’re feeling overwhelmed by the constant stream of AI announcements, you’re not alone. The good news: you don’t need to understand everything. You just need to know the essentials that affect patient care.

AI 101: Foundations and Applications

AI is the umbrella term for technologies that enable machines to simulate certain aspects of human intelligence – learning from data, understanding language, reasoning, and even taking action. Importantly, AI today is not a single technology; it’s a constellation of capabilities.

In primary care, it’s helpful to think of AI in two layers: the foundational technologies and concepts that power it, and the practical applications clinicians are likely to encounter.

Foundational technologies and concepts:

- Machine Learning (ML): Algorithms that learn patterns from data to make predictions or decisions without being explicitly programmed for each task.

- Natural Language Processing (NLP): Technology that enables computers to understand, interpret, and generate human language.

- Large Language Models (LLMs) – a subset of NLP: Advanced deep learning models, generative by design and trained on large-scale text datasets to understand context, summarize information, and generate text.

Practical applications:

These are the types of tools clinicians are most likely to encounter in their workflows. They build on the foundational technologies and concepts above.

1. Generative AI

- What it is: AI that produces new content (text, images, or summaries) based on user input (foundation is primarily LLM/NLP, sometimes ML).

- Examples: Summarizing visit notes, generating patient instructions, drafting referral letters, creating educational materials.

- Clinician takeaway: Reduces documentation and communication burden, but clinicians must validate outputs for safety and accuracy.

2. Agentic AI (still largely experimental in healthcare)

- What it is: AI that acts autonomously toward defined goals, making decisions and adapting in real time (foundation is primarily ML, with potential NLP integration for communication tasks).

- Examples: Autonomous care coordination tools, intelligent scheduling systems, smart patient follow-up assistants.

- Clinician takeaway: Can streamline workflows and care coordination, but introduces higher stakes for accountability, safety, and oversight.

3. AI Clinical Decision Support (AI-CDS)

- What it is: Tools that analyze patient data to guide clinical decisions or highlight risks (foundation is primarily ML, sometimes NLP for interpreting notes).

- Examples: Predictive risk models, digital twins, population health panel management tools.

- Clinician takeaway: Supports evidence-based, data-driven decision-making, but requires careful evaluation for bias, accuracy, and clinical relevance.

AI is not only becoming more powerful; it’s becoming more accessible. This power brings opportunities – but to borrow from an old Marvel adage, with great power comes great responsibility.

Responsible AI: A Practical Lens for Clinicians

With AI becoming deeply embedded in care, responsibility isn’t optional – it’s required. The Pan-Canadian AI for Health (AI4H) Guiding Principles and global frameworks share key themes that clinicians can use as a quick checklist:

- Safety and oversight: Does the tool reduce risk or introduce it?

- Transparency: Do you understand how the tool arrives at its output?

- Fairness and equity: Could it disadvantage certain patients?

- Privacy and security: How is personal health information (PHI) protected?

- Indigenous data sovereignty: Are tools respecting data governance in the Canadian context?

- AI literacy: Do clinicians and patients understand how the tool works well enough to use it safely and interpret its outputs responsibly?

You don’t need to be a data scientist to use AI safely; you just need a structured way to assess risk, benefits, and responsibilities.

Understanding Risk Levels in AI

Every week brings new AI scribes, triage assistants, predictive tools, chatbots, and workflow automations. But not all AI is equal, and not every tool belongs in every clinic.

Clinicians should start by asking five simple questions when evaluating any new AI solution:

- If it makes a mistake, what’s the impact on my patient?

- How autonomous is the tool?

- Does it access personal health information?

- Does it interact directly with patients?

- Does it influence my clinical decisions?

These questions help determine the risk level and what safeguards or approvals are needed.

Coming Soon: The Amplify Care Primary Care AI Framework

Responsible AI isn’t a barrier to innovation; it’s the foundation that allows innovation to thrive.

To reduce uncertainty and help clinicians make informed, safe decisions, we’re excited to share that we’ve developed the Amplify Primary Care AI Framework (coming soon!).

This framework will give clinicians and organizations a structured, step-by-step way to:

- Identify a clear use case

- Assess safety considerations

- Compare AI solutions objectively

- Verify that the chosen tool is safe and appropriate

- Implement and monitor ongoing use

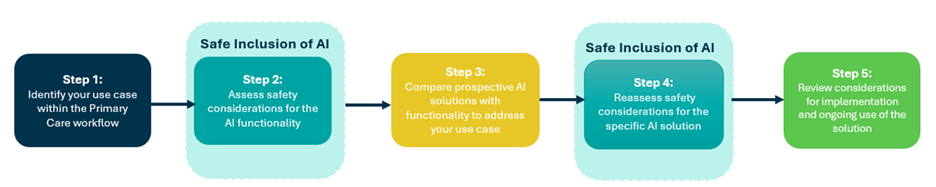

Sneak Peek: 5 Steps of the Framework

1. Identify your primary care use case

2. Assess safety considerations for the AI’s intended function

3. Compare candidate AI tools

4. Reassess safety considerations for the specific tool

5. Plan for implementation and continuous oversight

Our goal is simple: to make AI adoption safer, clearer, and less overwhelming for clinicians.

After all, clinicians don’t need to become AI experts; they just need the right tools and frameworks to guide safe decision-making.

If you’d like to be alerted when the framework is released, please sign up for our AI Amplified news pulse below!

Interested in staying up to date with all things AI? The AI Amplified news pulse is separate from our monthly newsletter: rather than a regular digest, it sends you need-to-know AI updates as they arise.

AI Amplified: Sign up here

